New Balance | Critical DfAM and the translucent giant

Written by Onur Gun

Published on March 1, 2021

Designers are curious creatures who have been messing with computers for a very long time. In this article, I'll explore how lessons from my earlier days of working with parametric modeling and environmental analysis tools on architecture projects inform how I design for additive manufacturing (AM) today.

Parametric modeling, environmental analysis tools, and architecture

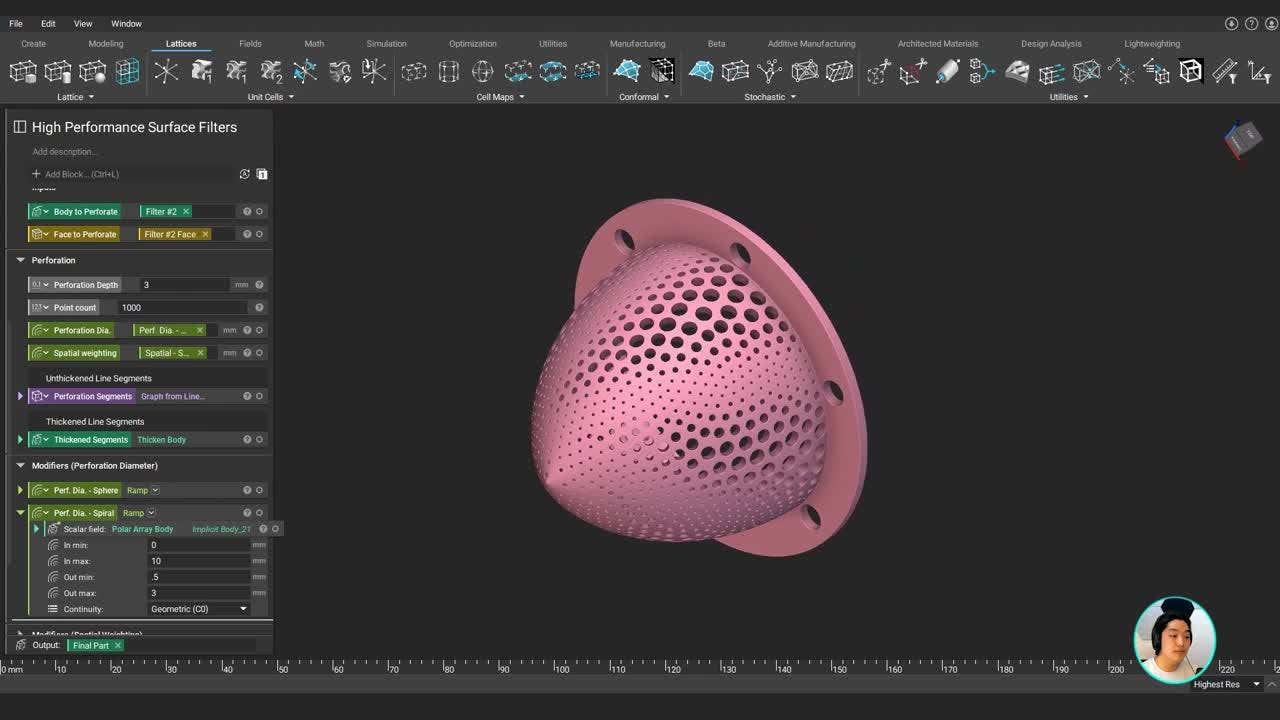

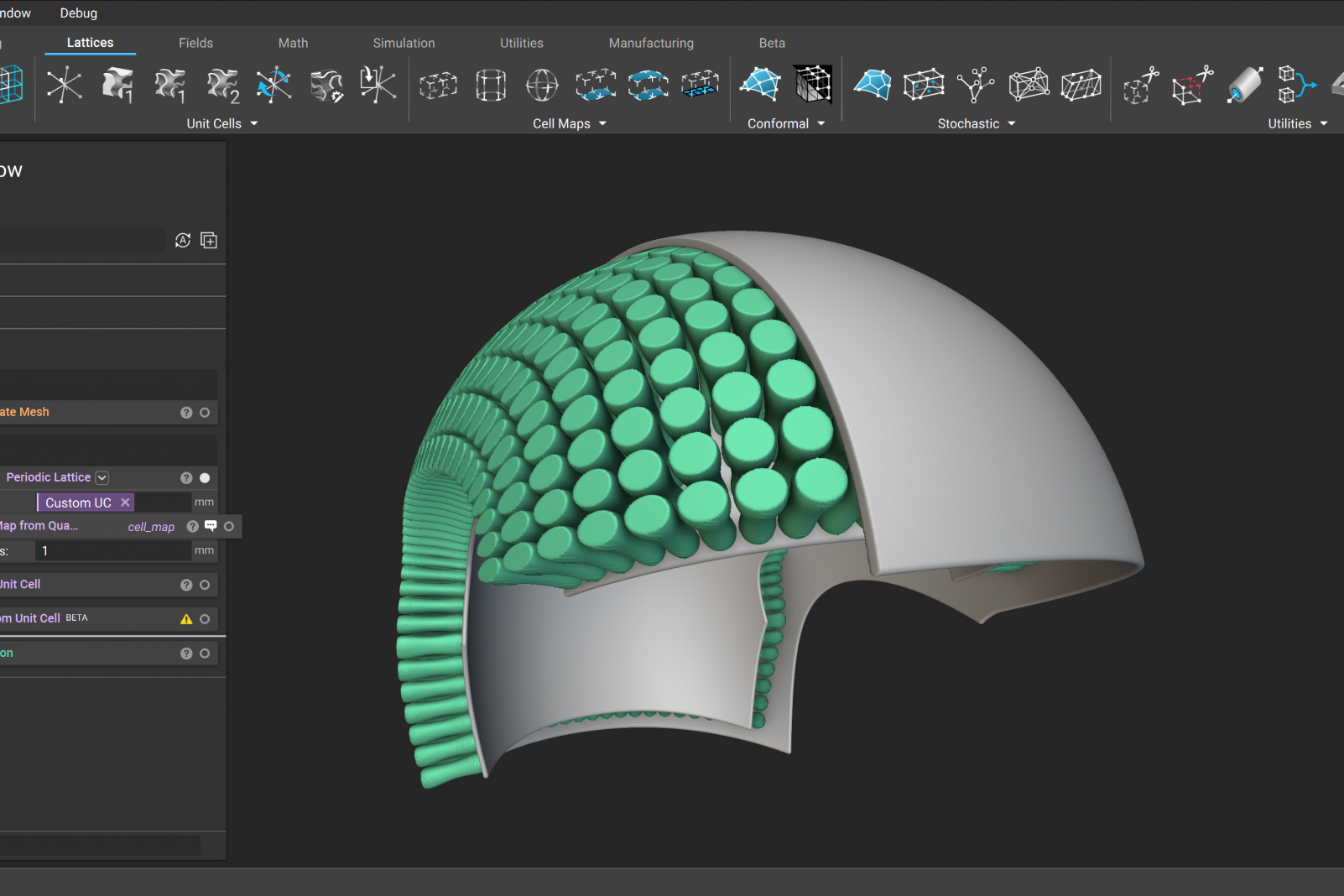

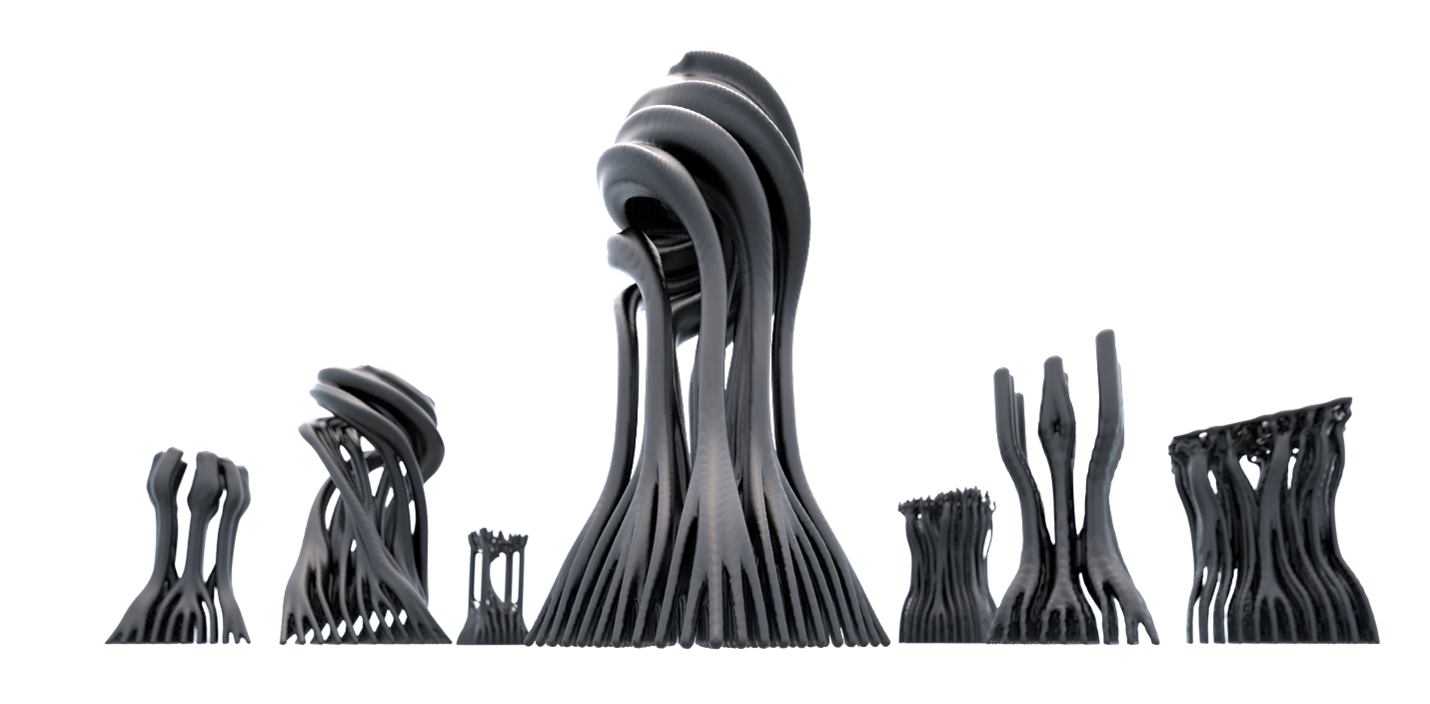

Artifacts for learning: Designed, rendered, 3D-printed and perception tested to develop a better understanding of forms and mechanical properties of materials.

As computational technologies became more accessible and simulations started taking off around the turn of the millennium, architects discovered another field of play: parametric modeling and environmental analysis tools. These tools became a hot duo because the environmental analysis tools rendered information as colors (solar exposure or visibility simulations) and the parametric modeling generated shapes (patterns) that responded to that information.

Fifteen years ago, implementing and using these types of tools was part of my responsibility. We tried our best to make simulations run, but the tools and processes were clumsy, and designers had to do extra work to carry out technical investigations. Additionally, we constantly reminded architects and clients that the results should be taken only as suggestions, not ultimate solutions, because everybody was all too ready to be convinced by the pretty colors rendered by simulations.

On the conceptual side, I found this opportunity fascinating for developing a basic intuition about the environmental conditions of a prospective building site. On the flip side, this magical method quickly became a trick that lacked the necessary investigation, evidence, and proof of its results in the real world.

Sometime around 2006, I saw one of the 'greenest' buildings emerge in NYC that later missed its environmental impact targets by a huge margin. (I am purposely leaving out the name of the building; a quick online search will be your friend if you are curious.)

Okay, so you might now be wondering what any of this has to do with design for additive manufacturing (DfAM). I’ll answer that for you now.

Critical DfAM

3D printers are now (almost) everywhere and will keep spreading. No, not everybody is printing their coffee mugs at home as once speculated, and that would be a waste of resources anyway.

But there has been a clear transformation in the utilization of 3D printers as all aspects of DfAM (the machines, materials, and software platforms) have shifted their focus from prototyping to manufacturing.

And yes, as you might have guessed or already know, today we have better digital design tools that help generate sophisticated shapes and run numerous simulations that yield cool colors. This is, again, a great opportunity, but only if we can be suspicious and inquisitive about what we see and remain investigative in what we do.

Enter critical DfAM. Simulations are not necessarily an accurate representation of reality. You already knew this; however, stating it once again has a point.

Data is not real.[i] It is something that we make up so that we can analyze, describe, and understand things. Alternative descriptions are always possible.[ii]

If you are running a simulation using data and producing other sorts of data at the end – you need to have many checkpoints along the way. These checkpoints are where you pit your simulation results against what happens in the real world while also investigating the feasibility of the input and output data.
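One way to picture such a checkpoint is as a small comparison between a simulated value and the same quantity measured on a physical part. The sketch below is only an illustration: the `Checkpoint` type, the quantities, the numbers, and the 10% tolerance are all hypothetical and would need to be replaced with values from your own simulations and mechanical tests.

```python
from dataclasses import dataclass


@dataclass
class Checkpoint:
    name: str
    simulated: float  # value predicted by the simulation (hypothetical)
    measured: float   # value measured on a physical part (hypothetical)


def relative_error(cp: Checkpoint) -> float:
    """Relative difference between the simulation and the measurement."""
    return abs(cp.simulated - cp.measured) / abs(cp.measured)


def failed_checkpoints(checkpoints, tolerance=0.10):
    """Names of checkpoints whose relative error exceeds the tolerance."""
    return [cp.name for cp in checkpoints if relative_error(cp) > tolerance]


# Illustrative numbers only, not results from a real test.
checkpoints = [
    Checkpoint("lattice stiffness (N/mm)", simulated=42.0, measured=40.0),
    Checkpoint("peak deflection (mm)", simulated=3.0, measured=4.0),
]

flagged = failed_checkpoints(checkpoints)
print(flagged)
```

With these made-up numbers, the stiffness prediction is within tolerance and the deflection prediction is flagged. A flagged checkpoint is a prompt to investigate both the model and the input data, not a verdict on either.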

All this happens by making actual things and developing an understanding of them through mechanical tests as well as physical and tactile interactions. These types of embodied interactions are simply irreplaceable, no matter how ‘intelligent’ your computational system is.[iii]

Sometimes, it is OK to do things because you simply can. Meandering in the digital realm helps rapid development and visualization of ideas. It also keeps your creativity running.

Realizing things requires responsibility. Our design intentions can only be verified by direct interactions. Our perceptions matter as much as numeric drivers, if not more.

The translucent giant

In a recent additive manufacturing meeting, I called nTop the Translucent Giant and got puzzled faces.

nTop is an ever-evolving engineering software platform that aims to offer a complete conception-to-realization workflow for DfAM, without stressing your CPU and GPU indefinitely and, hopefully, while reducing your stare-at-the-progress-bar time.

This is easy to say and yet hard to visualize, because such a process includes many (foreseeable and unforeseeable) steps including but not limited to modeling, simulating, optimizing, importing and exporting, informing, culling, and so on.

Remember: we are not using emergent modeling and 3D printing technologies to redo what we have been doing. We are rediscovering the ways of designing and manufacturing through changing processes, part definitions, and eventually designs.[iv]

In this respect, nTop becomes a giant, trying to handle all these operations within its expansive body.

Yet on the other hand, it tries to do so by diluting many complexities in the background and on its display. You can choose some operations to be visible or not at any given time, depending on the visual information you are trying to access. The field-driven modeling mindset is well reflected in the viewport rendering, so you can think in terms of distances, colors, and, yes, fields, along with conventional boundary representations (BReps).

So your model becomes conceptually 'translucent', and you see as much as you want.

Enter the translucent giant.

I am curious to see in which direction nTop evolves. I will be one of the demanding users, probably asking for improvements and additions to the design tools, and challenging the team to help me generate the model seen in this article with nTop’s native tools.

However, that might not be the ultimate goal for nTop. A giant should be sizable but not over-sized. In this respect, I see nTop as a powerful addition to a wisely prepared toolset. For now, I will investigate how the simulation engine’s results align with reality.

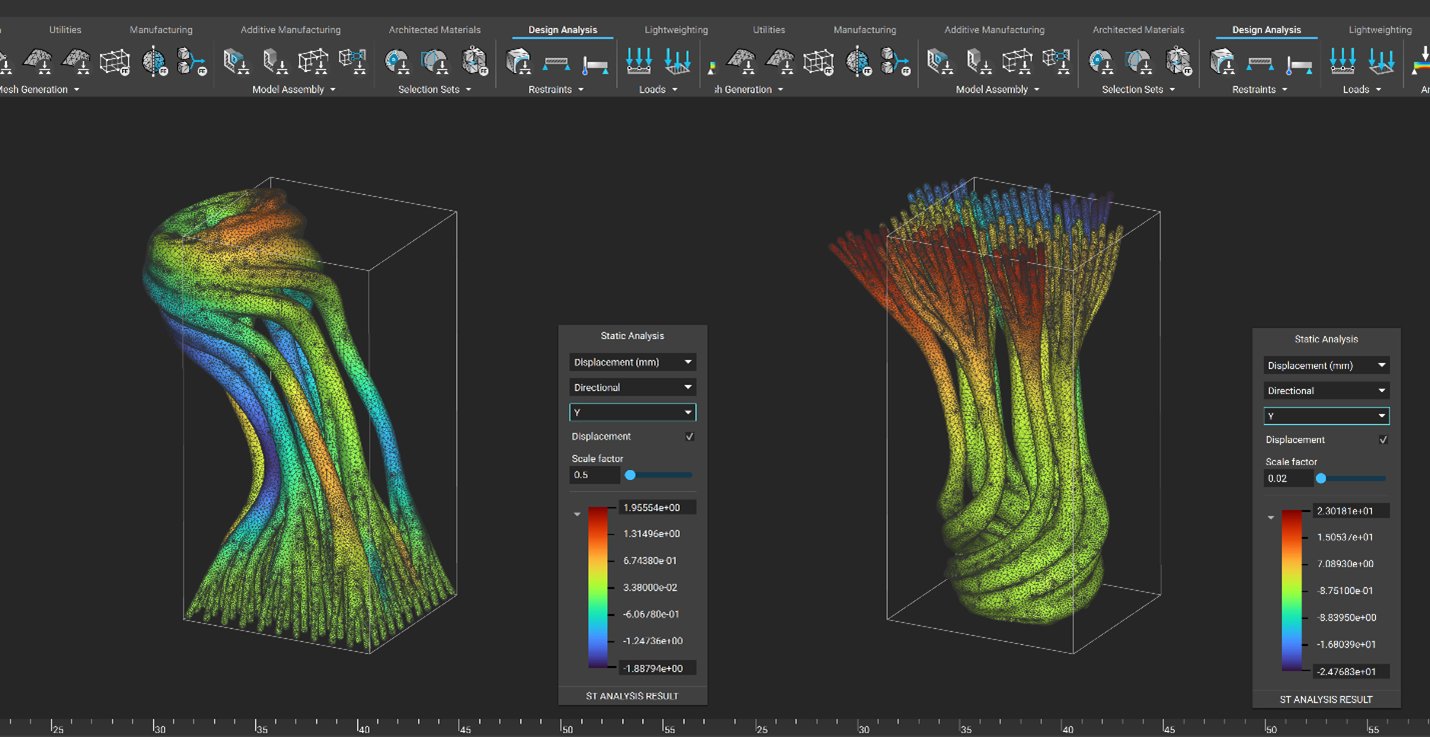

“Those who do not fear the sword they wield have no right to wield a sword at all.” If you want to understand how a computational tool really works, start with something you already know. Here I am comparing FEA simulation results to what happens in reality. That is helping me determine the misalignments between the simulation and real-world occurrences so that I can adjust my parameters to get a more convincing result in the next round.
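As a rough illustration of that adjustment loop, the sketch below fits a single scale factor between simulated and measured displacements by least squares and uses it to update a material stiffness. It assumes a linear-elastic, load-controlled model in which displacement is inversely proportional to Young's modulus; real printed parts are often nonlinear or anisotropic, so this is a starting point rather than a method. The function names and all numbers are hypothetical.

```python
def displacement_scale(simulated, measured):
    """Least-squares factor k that minimizes sum((k * s - m) ** 2)."""
    numerator = sum(s * m for s, m in zip(simulated, measured))
    denominator = sum(s * s for s in simulated)
    return numerator / denominator


def adjusted_modulus(current_modulus, simulated, measured):
    """Rescale the modulus so simulated displacements match on average.

    Assumes displacement is inversely proportional to the modulus,
    which only holds for linear-elastic, load-controlled models.
    """
    return current_modulus / displacement_scale(simulated, measured)


# Illustrative displacements at three load steps (mm), not real test data.
simulated_mm = [1.0, 2.0, 3.0]
measured_mm = [1.25, 2.5, 3.75]

k = displacement_scale(simulated_mm, measured_mm)
next_modulus = adjusted_modulus(1500.0, simulated_mm, measured_mm)
print(k, next_modulus)
```

Here the physical part deflects more than predicted, so the fitted factor is above 1 and the modulus for the next simulation round is lowered. Whether a single factor is enough is itself something to check against further tests.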

Value-driven mindset

The development of DfAM platforms is creating unprecedented solutions for ever-evolving digital manufacturing technologies. But such rapid development is bringing about the need for more awareness and criticality that cannot be gained at the processing speed of computers.

Let computers run, but also invest in yourself: as much as intelligence, you also need insight. Make a lot, fail early, and move forward so you can crunch the numbers in the right way. Find ways to develop designs that add value, not noise.

Always remind yourself that the computer screen, numbers, and colors might become deceptive. But regardless, use them to their fullest potential and keep exploring the uncharted realms, so we can produce better-performing products, foster a more sustainable mindset, and create better values that respond to our human needs overall.

See how others have leveraged state-of-the-art technology while weighing simulation results against the real world in this Journey Through Advanced Manufacturing Webinar Series.

[i] What is DATA? Data as a Tool for Describing in the Contexts of Art, Architecture and Footwear Design

[ii] Pirsig, Robert M. Zen and the Art of Motorcycle Maintenance: An Inquiry Into Values. 1R edition. New York, N.Y.: William Morrow Paperbacks, 2005

[iii] Dreyfus, H. L.: What computers still can’t do: A critique of artificial reason, The MIT Press, Cambridge, Mass, ix, 235 (1992)

[iv] How 3D Printing is Changing Part & Whole Theories in Design?

Onur Gun

Onur Yüce Gün is a seasoned computational designer and instructor whose expertise has helped him bring non-standard designs at extreme scales, from skyscrapers to minuscule 3D-printed lattices, to life. Onur instituted the Computational Geometry Group at Kohn Pedersen Fox New York (KPF) in 2006. His computational architecture work was published in Elements of Parametric Design. In 2009, he developed the curriculum and directed İstanbul Bilgi University’s undergraduate program in architecture. He has taught at MIT, RISD, and Adolfo Ibáñez University in Chile, and has acted as a mentor at numerous schools and workshops around the globe. Trained as an architect, Onur holds a Ph.D. and a Master's in Design and Computation, both earned at MIT. He is currently the Creative Manager of Computational Design at New Balance, where he develops computational design workflows and futuristic concepts with a specific concentration on DfAM (design for additive manufacturing).